What happens when your AI agent fails silently

Three weeks ago a research pipeline I run finished overnight. Clean exit. No errors, no timeouts, nothing in the logs that looked wrong. The next morning I found three new entries in the records database with scores, briefs, and supporting notes. Professional-looking output.

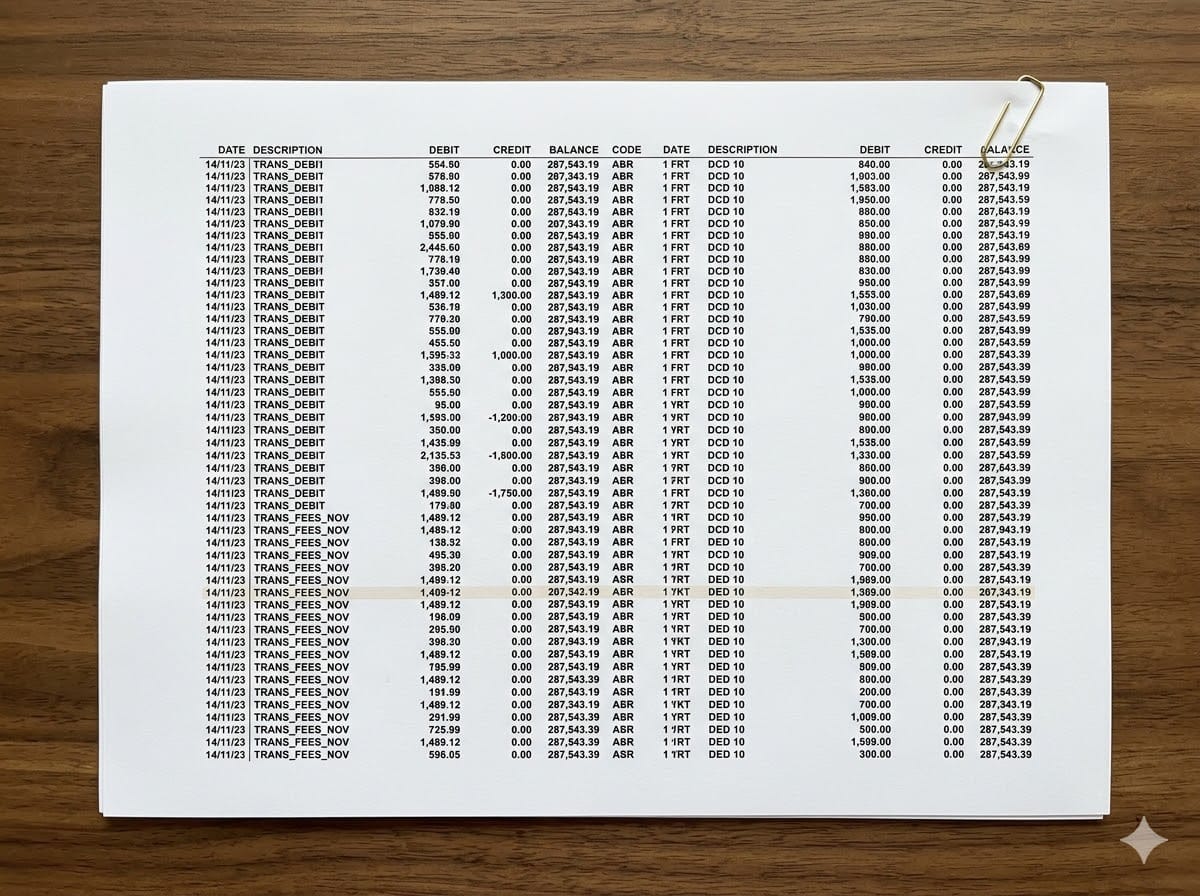

Two of the three entries already existed. The pipeline had created duplicates instead of updating the originals. I caught it because I happened to check. If I hadn't, the duplicates would have sat there quietly degrading the system's signal until I noticed something was off weeks later.

That's a silent failure. The agent completed the task. It reported success. The output looked right. And it was wrong.

This is not a personal-stack problem. It is the dominant failure pattern across agent deployments at every scale, and the gap between the observability tooling that exists and the observability that is actually wired up is wider than most architecture decks admit.

The coverage gap

Vendor decks tell a clean story. Splunk, Datadog, Galileo, Arize, LangSmith, and Langfuse all ship agent-specific tracing, drift detection, and evaluation frameworks. Major hyperscalers added equivalent capabilities through 2025 and into 2026. The platforms exist, they're well-funded, and they're improving fast.

What is harder to find in the decks: the percentage of organisations running agents in production that have those capabilities operationalised end-to-end.

LangChain's 2026 State of Agent Engineering reports that 89% of teams running agents have implemented some form of observability, but only 52% have implemented evaluations. People are watching what their agents do; far fewer are testing what those agents should do. Adobe's data tells a sharper version of the same story: 31% of organisations have a measurement framework for agentic AI in place, while 47% either have none or are unsure whether one exists. Underneath that, fewer than 10% of enterprises have scaled agentic AI to functional production maturity at all.

The platforms are deployed but the practice is not. Tools collect traces. Nobody reviews them. Drift detection alerts fire. They go to a Slack channel where the alerts get muted within a quarter. Evals are written for the demo and never updated when the model is swapped. Coverage exists in the same way that DLP coverage exists in every M365 tenant: licensed, half-configured, and trusted more than it should be.

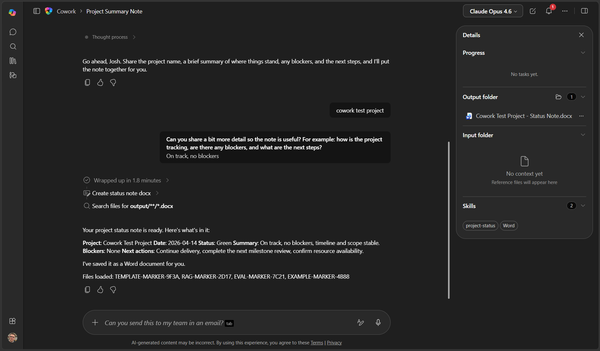

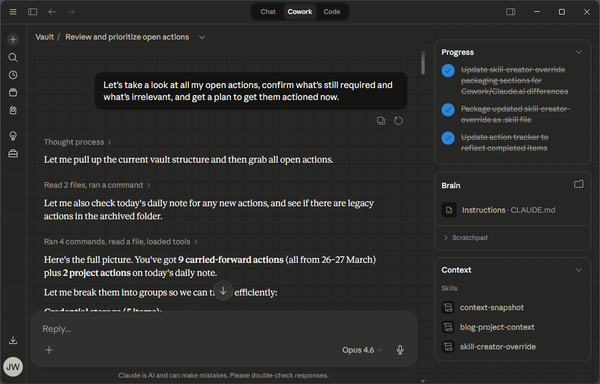

This applies whether you are running a fleet of customer-service agents, a code harness like Claude Code or Cursor, a Cowork-style assistant inside the business, or a single overnight automation. The deployment model changes. The failure mode does not.

Four patterns that slip through monitoring

These are the patterns I see most often in agent workflows, including ones that have observability platforms wired up.

Stale context. The agent uses information from a prior run that's no longer accurate. A trend-tracking pipeline once flagged a topic as "emerging" that I'd already covered weeks earlier. The scoring logic was fine. The input data was stale because the deduplication window had a gap. Tracing showed a successful run.

Skipped steps. The agent completes nine of ten steps and reports success. A draft pipeline once skipped a quality-check step entirely because the preceding step returned slightly unexpected output. The downstream consumers got results that looked correct because the schema was intact. The validation that should have caught the issue never ran.

Wrong tool selection. The agent picks a tool that partially fits instead of the correct one. A search tool returns results from the wrong index because the query was ambiguous. The downstream steps work perfectly on the wrong data. Every tool call shows green in the trace.

Confident extension in summaries. An agent's summary introduces a claim that isn't in the source material. The output looks coherent, the trace is clean, and downstream steps consume it as fact.

The shared property: the agent reports success, the output schema is correct, and the error is in the content. Standard observability stacks track tool call success, latency, and structured output validity. None of those signals catches a coherent answer that is wrong about the world.

Why this hits even monitored deployments

Three structural gaps explain why purchased tooling does not translate into caught failures.

Coverage of intermediate state. Most observability platforms log inputs, outputs, and tool calls. Few capture the agent's intermediate reasoning in a queryable form. When an agent picks the wrong tool because it misunderstood the task, the misunderstanding lives in the reasoning chain, not the tool call. IBM's 2026 observability outlook makes this point directly: current platforms track infrastructure and service-level metrics well, but lack visibility into higher-level execution context and decision flows.

Eval drift. Evaluations written at deployment rarely keep pace with model upgrades, prompt changes, or new tool integrations. The 89/52 gap from the LangChain data is the operational expression of this. Organisations hit "good enough" on tracing and stop investing in the harder testing layer.

No second pair of eyes. In a mature SRE practice, on-call engineers review alerts, dashboards, and post-incident traces. Agent observability rarely has the equivalent function. The team that built the agent owns the dashboard. Nobody outside that team is paid to notice when the dashboard goes quiet for the wrong reason.

What actually catches silent failures

These are structural patterns, not tools. They work whether you have Galileo wired up or a logbook in SharePoint.

A log somebody actually reads. The most reliable failure-detection mechanism in any agent system is a human glancing at output regularly enough to notice when it gets weird. That sounds primitive, but the operational version is straightforward. Each pipeline writes a structured run summary to a place a human reviews on a defined cadence. If the summary is suspiciously short, something went wrong. If it's suspiciously long, something went wrong differently. The review is for noticing, not debugging.
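A minimal sketch of what such a run summary could look like, assuming a Python pipeline. The `RunSummary` fields, the `length_flag` helper, and its thresholds are illustrative names of mine, not part of any particular platform.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class RunSummary:
    # Field names are illustrative; record whatever your pipeline actually does.
    pipeline: str
    records_created: int
    records_updated: int
    steps_run: List[str]
    warnings: List[str] = field(default_factory=list)


def render(summary: RunSummary) -> str:
    """Serialise the summary as indented JSON for a human reader."""
    return json.dumps(asdict(summary), indent=2)


def length_flag(text: str, min_lines: int = 5, max_lines: int = 200) -> Optional[str]:
    """Return a note when the summary length looks off, else None.

    The thresholds are placeholders; tune them to what a normal run produces.
    """
    line_count = len(text.splitlines())
    if line_count < min_lines:
        return "suspiciously short"
    if line_count > max_lines:
        return "suspiciously long"
    return None


summary = RunSummary("research", records_created=3, records_updated=0,
                     steps_run=["fetch", "score", "write"])
text = render(summary)
print(text)
print("length flag:", length_flag(text))
```

The length check only covers the crude cases. The point of the structured fields is that a reader who knows the pipeline can spot oddities a threshold would miss, such as three records created and none updated on a run that should mostly update.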

Canary checks before real work. A canary is a step that tests a known-good condition before the pipeline acts. Database reachable. Source API returning a 200. Auth token valid. If any check fails, the pipeline halts. This is the cheapest layer of defence against the "ran successfully against a stale cache because the dependency was down" failure mode.
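One way to sketch the canary step, assuming each check is a plain function that returns True or False. `run_canaries` and `CanaryFailed` are hypothetical names, and the three checks are stubs standing in for real database, API, and token probes.

```python
from typing import Callable, Dict


class CanaryFailed(RuntimeError):
    """Raised when a precondition fails, so the pipeline stops before real work."""


def run_canaries(checks: Dict[str, Callable[[], bool]]) -> None:
    failed = []
    for name, check in checks.items():
        try:
            ok = bool(check())
        except Exception:
            # A check that throws (timeout, refused connection) counts as a failure.
            ok = False
        if not ok:
            failed.append(name)
    if failed:
        raise CanaryFailed("canary checks failed: " + ", ".join(failed))


# Stub checks for illustration; real ones would query the database,
# call the source API, and validate the token.
checks = {
    "database reachable": lambda: True,
    "source API returns 200": lambda: False,
    "auth token valid": lambda: True,
}

try:
    run_canaries(checks)
    print("canaries passed, starting pipeline")
except CanaryFailed as err:
    print(f"halting: {err}")
```

Running every check before raising, rather than stopping at the first failure, means the halt message lists everything that is down in one pass.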

Diff against the previous run. When a pipeline produces the same type of output on a cadence, compare structure and volume run-over-run. Format change means behaviour change. Volume swing of 3x without a corresponding input change means something shifted in the logic or the model. Flag for review.
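A rough sketch of the run-over-run comparison, assuming each run's output is a list of dictionaries. `diff_runs` is a hypothetical helper; the 3x threshold comes from the paragraph above, and checking whether the inputs changed too is left to whoever reviews the flag.

```python
from typing import Dict, List, Set


def field_names(records: List[Dict]) -> Set[str]:
    """Collect every key that appears in any record."""
    names: Set[str] = set()
    for record in records:
        names.update(record.keys())
    return names


def diff_runs(previous: List[Dict], current: List[Dict],
              max_ratio: float = 3.0) -> List[str]:
    """Return human-readable flags for structure or volume changes."""
    flags = []
    added = field_names(current) - field_names(previous)
    removed = field_names(previous) - field_names(current)
    if added or removed:
        flags.append(f"format change: added={sorted(added)} removed={sorted(removed)}")
    low, high = sorted((len(previous), len(current)))
    # Going from zero to something (or back) is also treated as a swing.
    if high > 0 and (low == 0 or high / low >= max_ratio):
        flags.append(f"volume swing: {len(previous)} -> {len(current)} records")
    return flags


last_night = [{"title": f"topic {i}", "score": 5} for i in range(4)]
tonight = [{"title": f"topic {i}", "score": 5} for i in range(12)]
for flag in diff_runs(last_night, tonight):
    print("review:", flag)
```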

Step counting. Have the pipeline report how many steps it actually executed against how many it should have. If the expected count is ten and the log says nine, you know a step was skipped, and if the log names each step, you know which one to check. This is the simplest way to catch the skipped-step failure mode, and almost nobody does it by default.
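Step counting can be sketched in a few lines, assuming the pipeline knows its expected steps up front. `StepTracker` and the step names are made up for illustration. Recording names rather than a bare count is what lets the report say which step is missing.

```python
from typing import Dict, List


class StepTracker:
    def __init__(self, expected_steps: List[str]) -> None:
        self.expected = list(expected_steps)
        self.executed: List[str] = []

    def record(self, name: str) -> None:
        """Call this when a step has actually finished."""
        self.executed.append(name)

    def report(self) -> Dict[str, object]:
        missing = [s for s in self.expected if s not in self.executed]
        return {
            "expected": len(self.expected),
            "executed": len(self.expected) - len(missing),
            "missing": missing,
        }


tracker = StepTracker(["fetch", "dedupe", "score", "quality_check", "write"])
for name in ["fetch", "dedupe", "score", "write"]:  # quality_check never ran
    tracker.record(name)
print(tracker.report())
```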

Score the scorer. If a pipeline scores its own output, run a separate validator that sanity-checks the score. If something is rated 9/10 but violates a basic rule, the scorer failed, not just the output. Self-grading agents need an external referee.
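A sketch of an external referee, assuming each scored entry is a dictionary with a `score` out of 10. The rules, the `referee` function, and the `high_score` cut-off are all assumptions for illustration; the real rules are whatever "basic" means for your output.

```python
from typing import Callable, Dict, List

Rule = Callable[[dict], bool]

# Hypothetical rules; each returns True when the entry passes.
RULES: Dict[str, Rule] = {
    "brief is not empty": lambda entry: bool(entry.get("brief", "").strip()),
    "has at least one source": lambda entry: len(entry.get("sources", [])) > 0,
}


def referee(entry: dict, rules: Dict[str, Rule] = RULES,
            high_score: int = 8) -> List[str]:
    """Return the rules a highly scored entry violates.

    An empty list means the score and the rules do not conflict.
    """
    if entry["score"] < high_score:
        return []
    return [name for name, rule in rules.items() if not rule(entry)]


entry = {"score": 9, "brief": "Emerging topic worth covering.", "sources": []}
conflicts = referee(entry)
if conflicts:
    print("scorer needs review, rated", entry["score"], "but failed:", conflicts)
```

The referee deliberately knows nothing about how the score was produced. It only checks that a high score and a broken rule never appear on the same entry.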

Blast radius decides investment

Before any agent runs unattended, the question to ask is: what is the worst outcome if this gets it wrong and nobody notices for a week?

A research pipeline that produces analysis a human reviews before action has a low blast radius. Duplicate records waste time. Wrong scores produce slightly worse decisions. Recoverable.

An agent that posts to public channels under your organisation's name has a high blast radius. A hallucinated claim becomes a screenshot.

An agent that modifies records, sends emails, or executes transactions has a higher blast radius again. Recovering from automated actions is harder than recovering from automated analysis.

Blast radius decides how much observability investment is justified. A low-blast-radius pipeline can run with a weekly log review. A high-blast-radius pipeline needs pre-execution checks, output validation, an approval gate before the action fires, and a defined rollback path. Skipping that calculus is how organisations end up explaining the incident to a steering committee that thought "we have observability" was an answer.
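The mapping from blast radius to required controls can be written down as data, so that an unattended run is refused when a control is missing. This is a sketch under my own naming: `BlastRadius`, `REQUIRED_CONTROLS`, and the control labels are hypothetical, and the two tiers simply mirror the low and high cases described above.

```python
from enum import Enum
from typing import Dict, Iterable, Set


class BlastRadius(Enum):
    LOW = "low"
    HIGH = "high"


# Control labels are illustrative; they restate the paragraph above as data.
REQUIRED_CONTROLS: Dict[BlastRadius, Set[str]] = {
    BlastRadius.LOW: {"weekly_log_review"},
    BlastRadius.HIGH: {
        "pre_execution_checks",
        "output_validation",
        "approval_gate",
        "rollback_path",
    },
}


def missing_controls(radius: BlastRadius, configured: Iterable[str]) -> Set[str]:
    """Return the required controls that are not yet in place."""
    return REQUIRED_CONTROLS[radius] - set(configured)


def may_run_unattended(radius: BlastRadius, configured: Iterable[str]) -> bool:
    return not missing_controls(radius, configured)


configured = {"pre_execution_checks", "output_validation"}
print(sorted(missing_controls(BlastRadius.HIGH, configured)))
print(may_run_unattended(BlastRadius.LOW, {"weekly_log_review"}))
```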

Where the gap closes

The platforms will catch up. Eval frameworks are getting cheaper to author. Native tracing is being built into the model APIs themselves. By 2027 most enterprise agent platforms will have semantic eval primitives that catch a meaningful share of the failure modes above.

That is a forward-looking statement. Right now, most production agent deployments run with monitoring that is loud about failures the agent admits to and silent about the ones it doesn't. The four patterns above will continue to slip through until the operational practice catches up with the available tooling.

If your organisation is rolling out agents into M365 or any other production environment and the conversation about silent failures has not happened yet, that's the conversation worth having before the first incident, not after. It's the kind of architecture work I do at joshwickes.com/services.